Field Tests

Audience: Students and Parents

The projects below were conducted by organizations that participated in a Community of Practice to field test the messaging recommendations of the Math Narrative Project. Between April 2025 and January 2026, organizations directly engaged 6th-10th grade public school math students and their parents. Check out the messaging each organization developed, how they tested it, and what they learned about helping to better support students’ math learning.

Bob Moses Center & The Young People’s Project

In Miami, FL, the Bob Moses Center for Math Literacy Through Public Education and The Young People’s Project embedded messages about student agency, normalizing mistakes, seeking help, and reframing struggle into The Young People’s Project’s peer-led afterschool math program. The program served up to 20 6th and 7th graders, was facilitated by 10 of their 8th grade peers, and was enhanced by videos the 8th graders created.

Combining near-peer teaching and key messaging elevated student agency and placed emotional experience at the center of math learning. 8th grade leaders and 6th/7th grade students described YPP as a space that positively shifted their emotional relationships with math, giving them more autonomy and control over what was taught and how. This helped normalize discussions about the value of making mistakes, struggling in math, and asking for help. A key finding is that near-peers make excellent messengers!

The group discussions about the 8th graders’ math narrative videos validated the 8th graders, helping them focus and organize themselves to create the learning environment they wanted. After the videos, 6th/7th graders adopted a mantra: “Humans make mistakes!”

“But when you actually ask (the 8th graders) for help they will be like, ‘Oh, you need help. I can help you right now. I don’t have nothing to do.’ They help you understand the answer correctly, and you tell them, ‘Thank you for helping me. You’re a good teacher, and I like you.’” – Gabriel

“The eighth graders try their hardest to make sure we know everything. And they try, they try their hardest to teach us.” – Trevor

Sharing the videos was pivotal. For the pre-survey, students responded with answers they expected adults to want to hear. However, when discussing the videos, they were more open and honest.

Through debriefs, researchers’ conversations, and focus groups, students articulated how they experience the messaging recommendations in YPP but not in school, and teachers discussed the value of adopting peer-led learning to support messaging recommendations in the classroom.

EdLight

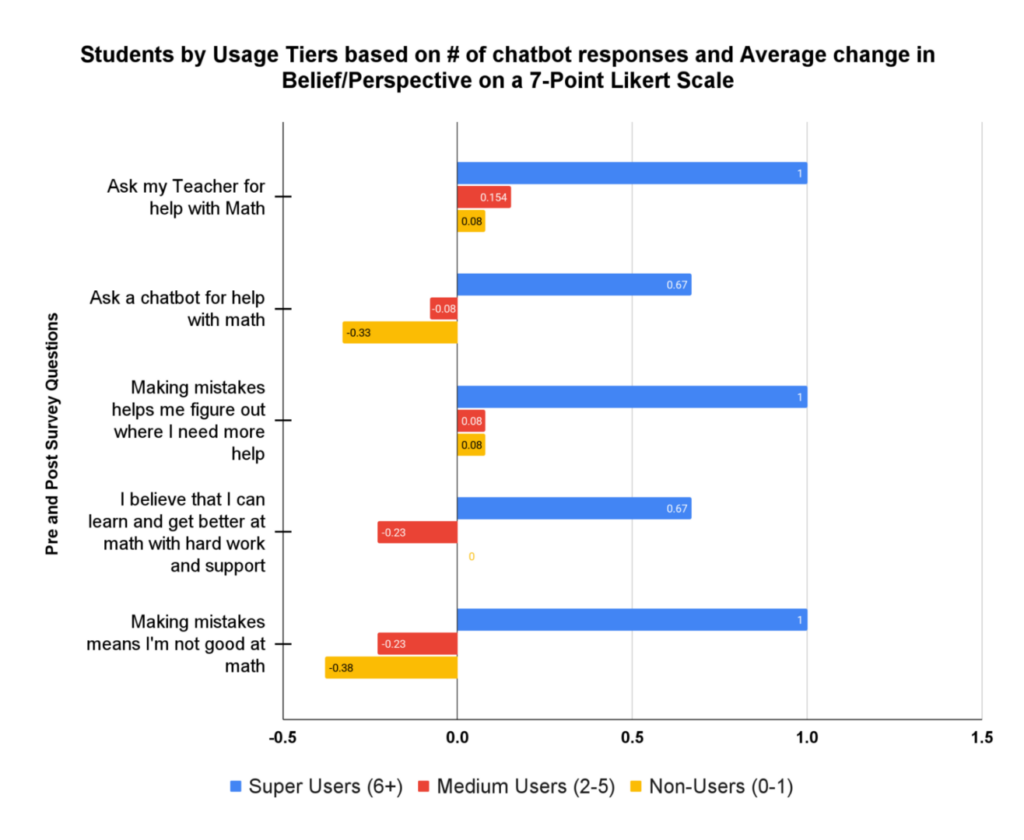

To rekindle math confidence, EdLight embedded messages about reframing struggle, normalizing mistakes, and encouraging help-seeking into Ember, an AI chatbot responding to students’ handwritten math work. The project reached 114 students at KIPP SoCal and measured impact with pre/post surveys and transcript analysis.

When positive math narratives were enacted through AI feedback immediately after students submitted their daily handwritten work, students’ beliefs about the value of mistakes and help-seeking shifted in measurable ways.

The strongest changes occurred among super users (6+ interactions with Ember) who experienced these narratives repeatedly in moments of real struggle.

Among super users (on a 7-point Likert scale):

- +1.00 increase in seeing mistakes as helpful for learning

- +1.00 increase in rejecting the belief that mistakes signal low ability

- +1.00 increase in willingness to ask teachers for help

Individual student journeys reinforced these patterns:

- Student C no longer described feeling frustrated and instead described math as “challenging in a good way”

- Student D reported excitement about math for the first time post-intervention

Students with limited or no exposure to Ember showed flat or negative movement on the same measures.

Together, these findings suggest that narrative recommendations delivered by an AI chatbot are most impactful when students repeatedly experience them enacted in response to their own work, reshaping how struggle is interpreted in real time.

Translating research into student-facing AI demanded exceptional care. EdLight began with non-interactive nudges, progressed to closed-response conversations, and conducted regular transcript reviews. This human-in-the-loop approach kept Ember’s responses emotionally in tune, contextually grounded, and worthy of student trust, establishing a best practice for responsible narrative delivery at scale.

FHI 360

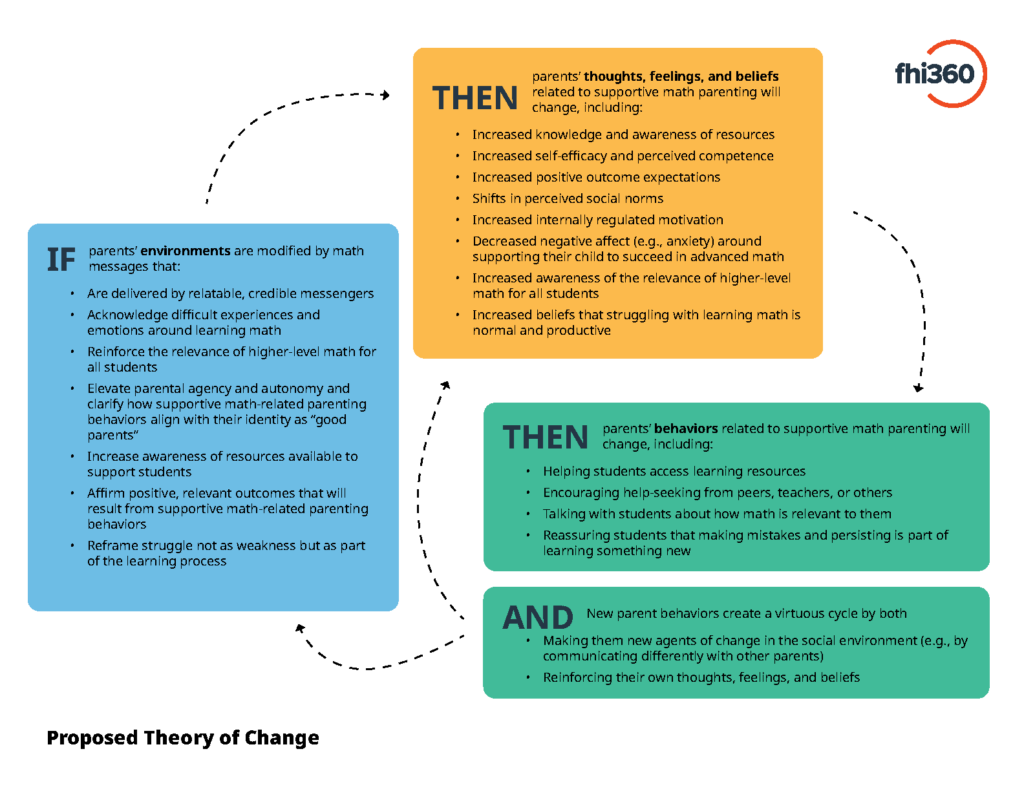

You Make Math Possible validates recommendations that help parents and caregivers of 6th–10th graders support math learning. Through iterative testing, the project builds practical, evidence-informed messages on struggle, relevance, and the importance of parental support to students’ math success.

*These findings reflect initial learnings from the midway point of the project.

Findings from advisory boards, focus groups, interviews, and surveys with families across four states showed several consistent patterns:

- Reframing struggle as growth increased hope and willingness to engage. Messages such as “Struggling is normal—and success is possible” reduced guilt and anxiety and helped parents view difficulty as part of learning, making them more likely to seek support.

- Affirming parents’ existing strengths boosted confidence. Asset-based phrases like “You have the power to help” made parents feel more capable and increased intentions to click on links or explore resources.

- Parents preferred concrete, clearly previewed resources, especially when messages highlighted specific, free supports (for example, “5 ways to help your child now”).

- Language and trusted messengers shaped impact. Accessible terms such as “more complex” or “next-level math” worked better than “advanced” or “higher level math,” and teacher-delivered messages outperformed parent-delivered messages among parents who identify as “not math people” and families with lower incomes.

Building effective math messages requires iterative design with parents, advisors, and the Community of Practice. This process included focus groups, interviews, Advisory Board input, A/B testing, and multiple rounds of message design and revision, culminating in a sequenced messaging plan (linked below). Shifting narratives requires ongoing, connected messaging.

Research-tested messages that can be adapted for materials, testing, or outreach.

To strengthen parent-facing math messages.

Goblins

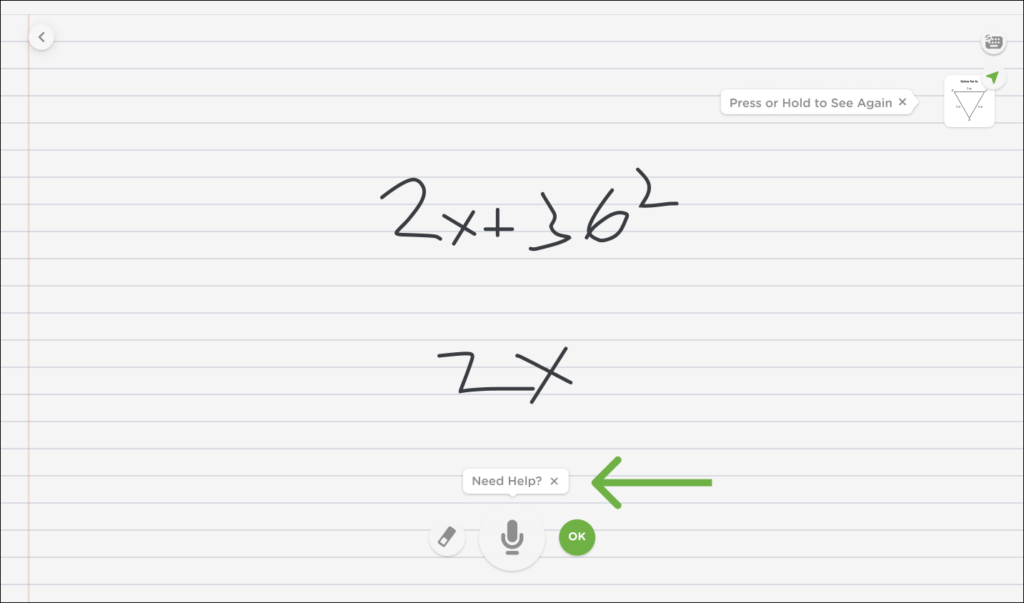

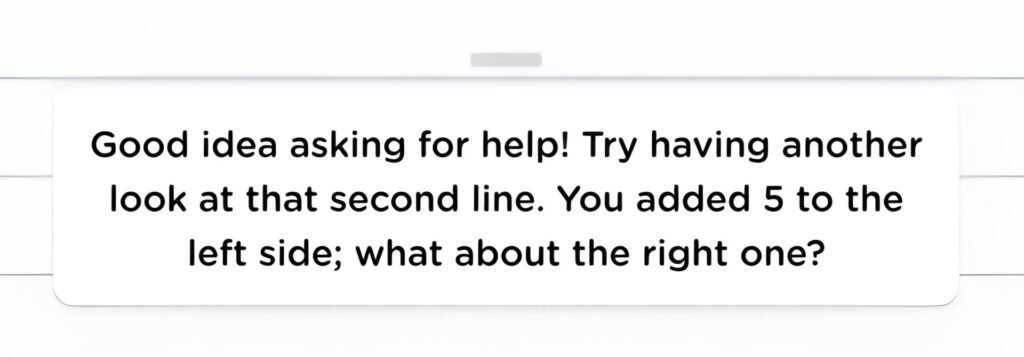

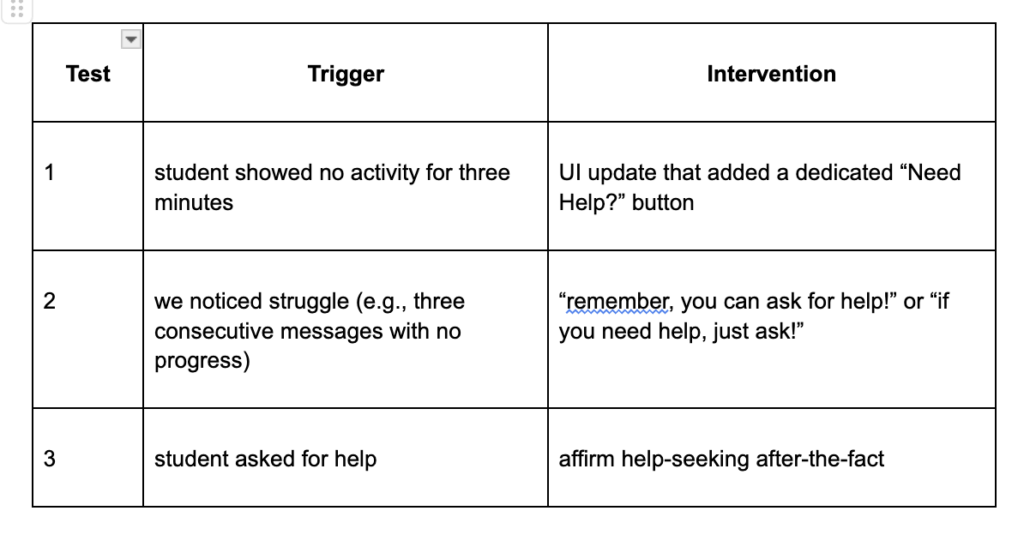

Goblins’ responses and user interface were modified to encourage students to ask for help, either by intervening when students were believed to be struggling or by affirming help-seeking after the fact. Students were more likely to ask for help after receiving these interventions.

Across 6 classrooms in New York City Title I schools (142 students in total):

- In surveys, students were 21% more likely to strongly agree that “Asking for help was effective in learning today,” and 26% more likely to strongly agree that “I anticipate asking for help in math class when struggling in the future” after having been encouraged to seek help at least once by Goblins across any intervention.

- “Noticing struggle” proactively was a more impactful trigger, by a factor of 2, than responding to student inactivity with a “need help” button, as measured by likelihood to select “strongly agree” to either prompt after receiving either intervention (note: students had to have clicked on the help button).

- Learnings include a heightened appreciation for the importance of students’ mindsets, on par with that of their academic abilities. The two go hand in hand. Just as product designers can positively influence academic outcomes, they can also deliberately improve students’ mindsets, and each feeds the other.

When surveying students, it’s critical to walk them through the question content before passing out the paper to them; many students suppose they know what they’re being asked, though they’re often mistaken.

The initial lift in measuring student behavior and messaging type from within an app is the biggest; once you’ve done the upfront work properly, you can slice things up into insights very easily.

The project performed 3 unique tests, each with a different trigger and intervention, across the 6 classes.

The classifier noticed the request for help, and Goblins affirmed the help successfully.

MyVillage Project

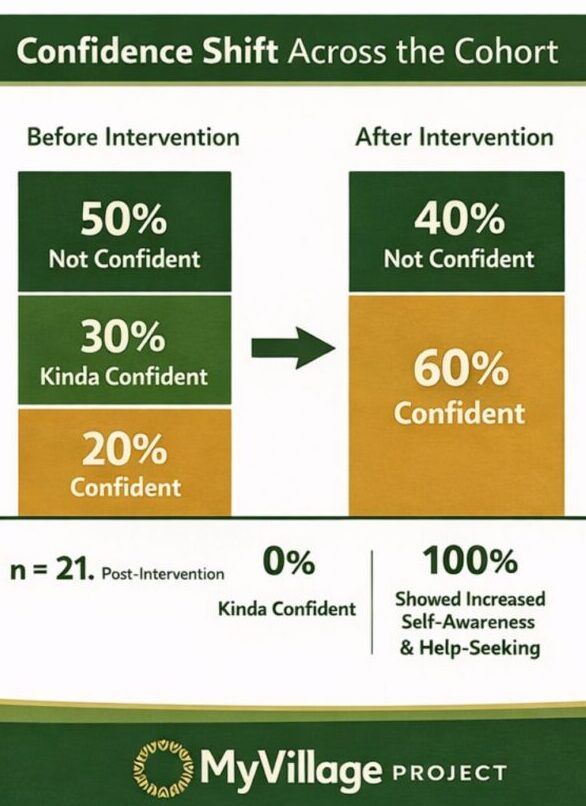

MyVillage Project tested a village model to shift math narratives via near-peers, caregivers, and student tools. Messages normalized confusion, treated mistakes as data, valued help-seeking over speed, and linked math to life. Shifts among 21 Florida middle-schoolers were tracked using reflections and AI.

Confidence gains (n=21):

Confidence increased across the cohort. At baseline, ~50% of students were not confident, ~30% were somewhat confident, and ~20% were confident. After the intervention, ~60% were coded confident, ~40% not confident, and no students remained “somewhat confident.”

Behavioral shifts:

Narrative change showed up as behavior change. All students demonstrated improved self-awareness, used mistakes to learn, persisted after mistakes, and increased help-seeking. Fourteen students (67%) explicitly rejected the belief that speed equals intelligence.

Speed reframing:

Although not an original Math Narrative Project belief target, student listening revealed many participants equated speed with intelligence. Early focus groups showed students felt “behind” or “bad at math” when they needed more time, linking pace—not understanding—to self-worth. We added messaging that decoupled speed from capability (e.g., “being good at math isn’t the same as being fast”), reducing time pressure and shame while supporting persistence, question-asking, and learning from mistakes.

High-leverage themes:

Normalize struggle, reframe mistakes as information (not shame), reduce speed pressure, and connect math to identity, goals, and independence.

Delivery & reinforcement:

Near-peer messengers increased credibility and moved students from silence to question-asking and persistence. Consistent reinforcement across texts, caregivers, community partners, and games increased stickiness.

Build a shared message set first, then repeat it weekly across channels (near-peer texts + caregiver prompts) so students hear consistent cues in different contexts. Pair narrative work with lightweight measurement—pre/post reflections and a simple confidence-labeling model—for fast feedback without burdening staff. Key lesson: instrumentation is the heavy lift; once set up, insights are easy to iterate.
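The lightweight measurement described above can be sketched as a comparison of confidence labels before and after the intervention. This is a minimal illustration, not the project's actual model: the function names, the three label strings, and the four-student sample are assumptions made up for the example.

```python
from collections import Counter

# Assumed label set, mirroring the three categories reported in the findings.
LABELS = ("not confident", "somewhat confident", "confident")


def label_shares(labels):
    """Return the share of students carrying each confidence label."""
    if not labels:
        raise ValueError("need at least one label")
    counts = Counter(labels)
    return {label: counts[label] / len(labels) for label in LABELS}


def label_shift(pre, post):
    """Return the change in each label's share from pre to post."""
    pre_shares, post_shares = label_shares(pre), label_shares(post)
    return {label: post_shares[label] - pre_shares[label] for label in LABELS}


# Hypothetical labels for four students, invented for illustration only.
pre = ["not confident", "not confident", "somewhat confident", "confident"]
post = ["not confident", "confident", "confident", "confident"]

for label, delta in label_shift(pre, post).items():
    print(f"{label}: {delta:+.0%}")
```

How each reflection gets its label (by hand or by a model) is left out on purpose; the sketch only covers the tallying step that turns labels into a pre/post comparison.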

See (student-built) math confidence model and how they used community affirmations + confidence labeling to measure narrative shift.

OKO

In pilots at one school in New York and one in Georgia, we integrated Math Narrative recommendations into OKO’s AI facilitation for small group collaborative math activities in order to elevate student agency, acknowledge emotions, affirm the value of mistakes, encourage help-seeking, and reframe struggle.

The most effective messaging focused on normalizing mistakes and encouraging help-seeking, shifting students from a “fixed mindset” of avoidance to a “growth mindset” of engagement.

- Beliefs: A 22% increase was observed in students believing they could fix errors with effort, alongside a 12% rise in the belief that asking questions is valuable.

- Behaviors: Student actions aligned with these belief gains. On OKO, Questions Per Minute rose steadily, and Collaboration Scores shifted from “Needs Intervention” (passive) to “Needs Guidance” (active).

- Resilience: A “proficiency bounce-back” was observed. When difficulty spiked, scores recovered rather than flatlining, confirming that messaging built the agency to persist through struggle.

- Product Loop: Crucially, this willingness to talk (Agency) fueled our product. It gave the AI the data it needed to provide accurate coaching, making the platform more impactful for students and teachers.

The Shift: Hard-coding MNP values into the scoring rubric moved feedback beyond praise (“Good job!”) to rewarding risk-taking. Behaviors were measured with Community of Practice guidance.

1. Professional Development: Shifting beliefs and emotions about math learning requires consistent practice and reinforcement. To ensure “instructional coherence”, PD was enhanced to help teachers reinforce these behaviors alongside OKO.

2. Product: LLMs struggle with abstract guidelines. Using few-shot examples with contrastive sets helps prevent generic output and ensures the AI captures the necessary nuance.
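The few-shot approach with contrastive sets can be sketched as a prompt builder that pairs each student turn with a reply to avoid and a reply to prefer. This is a minimal illustration under stated assumptions, not OKO's implementation: the class, the function, the prompt layout, and the example wording are all hypothetical, and no particular LLM API is assumed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContrastivePair:
    """One few-shot example: a student turn with a weak and a strong reply."""
    student_turn: str
    generic_reply: str   # the kind of output to avoid
    targeted_reply: str  # the kind of output to imitate


def build_few_shot_prompt(guideline, pairs, new_turn):
    """Assemble a plain-text prompt from a guideline and contrastive examples."""
    parts = [f"Guideline: {guideline}", ""]
    for i, pair in enumerate(pairs, start=1):
        parts += [
            f"Example {i}",
            f"Student: {pair.student_turn}",
            f"Avoid: {pair.generic_reply}",
            f"Prefer: {pair.targeted_reply}",
            "",
        ]
    # End on an open "Prefer:" slot so the model completes the targeted reply.
    parts += [f"Student: {new_turn}", "Prefer:"]
    return "\n".join(parts)


# Hypothetical example content, invented for illustration only.
pairs = [
    ContrastivePair(
        student_turn="I think I messed up the second step.",
        generic_reply="Good job!",
        targeted_reply="Spotting that is useful. What did the mistake show you about the second step?",
    )
]
prompt = build_few_shot_prompt(
    "Affirm the value of mistakes and encourage help-seeking.",
    pairs,
    "I don't get this one. Can someone explain it?",
)
print(prompt)
```

Showing the generic reply next to the targeted one gives the model a concrete boundary to stay on the right side of, which an abstract guideline alone does not provide.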

The Emancipation Group

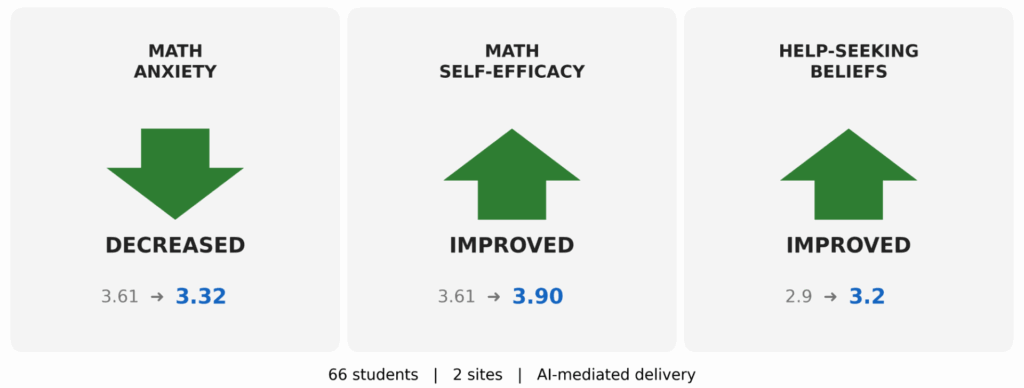

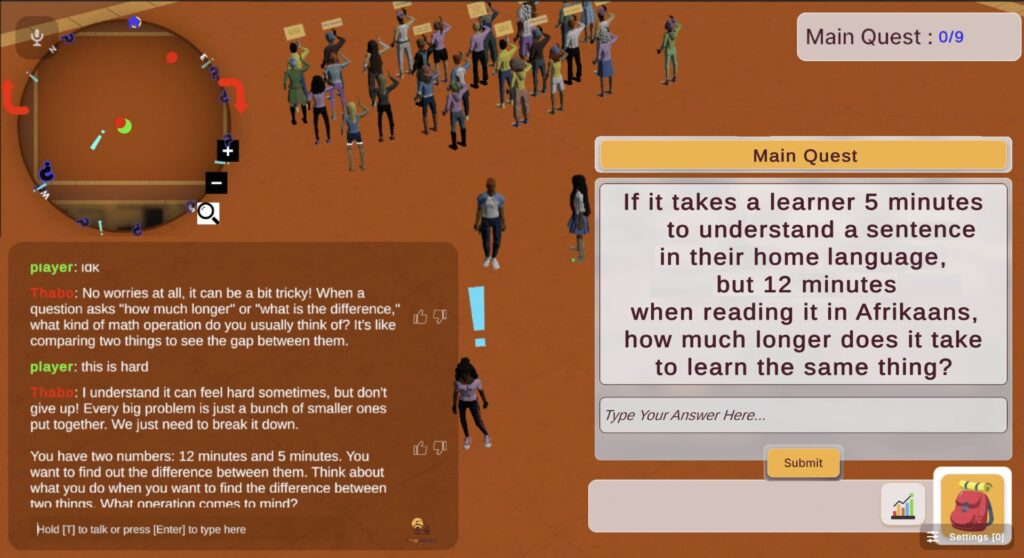

The Emancipation Group built an AI tutor inside a video game and taught it to recognize the exact moment a student was struggling with a math problem. At that moment, and only that moment, the AI delivered a targeted message about struggle and capability. Across 66 students in two cities, math anxiety dropped, confidence grew, and students became more willing to ask for help.

Measured Outcomes

- Math Anxiety DECREASED: 3.61 to 3.32. Students came back to their next session carrying less dread. Once they had been supported through the hardest moment, the fear started to lift.

- Math Capability Beliefs IMPROVED: 3.61 to 3.90. Pushing through real difficulty, with the right support at the right moment, changed what students believed they could do.

- Help-Seeking Beliefs IMPROVED: 2.9 to 3.2. When the AI made support feel like a normal part of working through a problem, students stopped treating asking for help as a sign of weakness.
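The size of each shift follows directly from the reported scores. The short sketch below computes post minus pre for the three measures; the dictionary keys and function name are illustrative, and the rating scale is not stated in the source, so the changes are in raw scale points only.

```python
# Pre/post scores exactly as reported above; the rating scale is not stated.
reported = {
    "math_anxiety": (3.61, 3.32),
    "capability_beliefs": (3.61, 3.90),
    "help_seeking_beliefs": (2.9, 3.2),
}


def change(pre, post):
    """Return post minus pre, rounded to two decimals to hide float noise."""
    return round(post - pre, 2)


changes = {name: change(pre, post) for name, (pre, post) in reported.items()}
for name, delta in changes.items():
    print(f"{name}: {delta:+.2f}")
# math_anxiety: -0.29
# capability_beliefs: +0.29
# help_seeking_beliefs: +0.30
```

For math anxiety a negative change is the improvement; for the two belief measures a positive change is.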

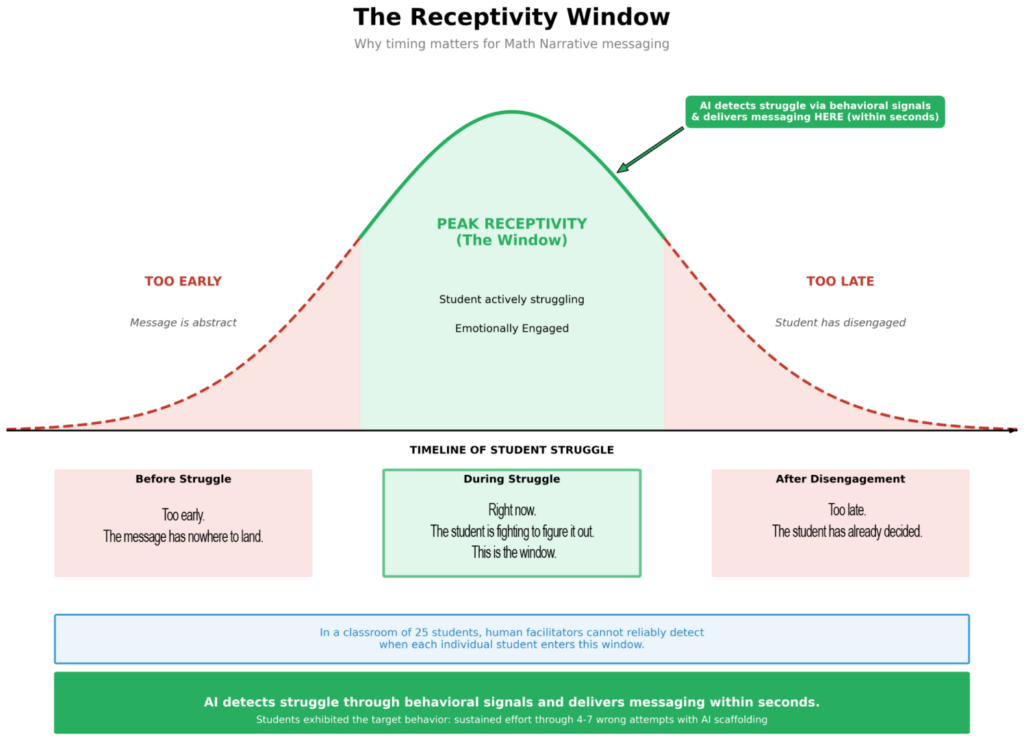

Insights for AI-Mediated Math Narrative Deployment

- The best moment to reach a student is the hardest one. AI detects the exact moment a student hits the wall and delivers a message within seconds, inside the peak receptivity window. That is when students are most open to a new belief about themselves. No teacher can reach every student at that moment, at that speed, at scale. AI can.

- The same message does not work for every student. When all students received identical encouragement, most improved but some shifted in the wrong direction. The AI has to read where a student starts before deciding what to say. Adaptation is not a feature. It is the foundation.

- No audience means no shame. In a classroom, getting a problem wrong in front of peers carries a social cost, and that cost stops students from trying again. Without an audience, students failed four to seven times in a row and kept going until they got it right. Six percent of students walked into a session hostile to the chatbot and left genuinely engaged in mathematics, within a single session. AI does not ask students to be braver. It removes the condition that demands bravery.

- AI tracks what students do, not just what they say they feel. Students showed the hardest behavior to teach: they stayed with a problem through four to seven wrong answers and kept going until they got it right. That kind of behavioral data gives us real-time evidence of growth that a survey cannot capture on its own, because surveys ask students to name what they are experiencing before they fully understand it themselves. Self-reporting was used to confirm what the behavioral data was already showing.

Watch the game walkthrough